Here's a number that should keep every CMO up at night: Gemini mentions brands 32.5% of the time, while ChatGPT mentions them only 15.6% of the time. That's a roughly 2x gap between just two AI engines. Now imagine you're only checking ChatGPT. You're watching the engine that mentions brands least, and you may be missing much of the AI-driven brand discovery happening elsewhere on the web.

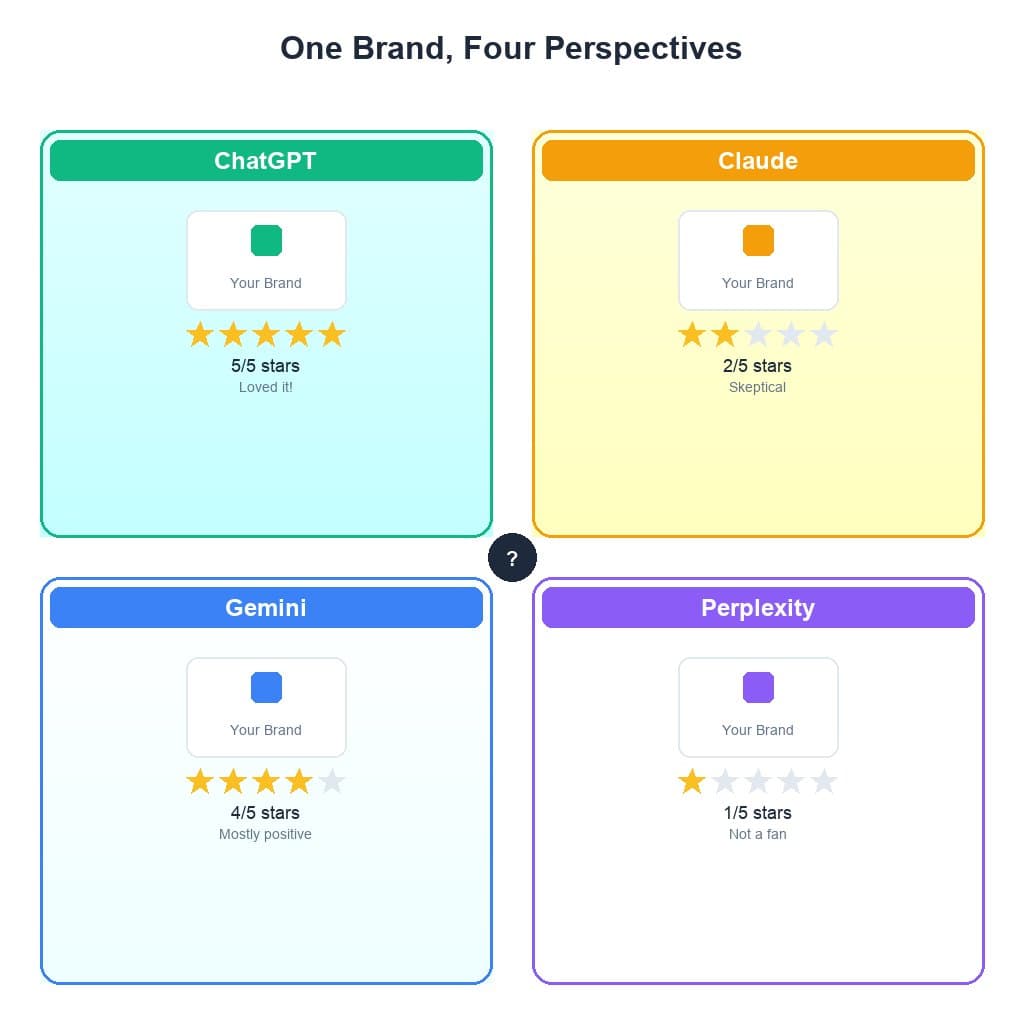

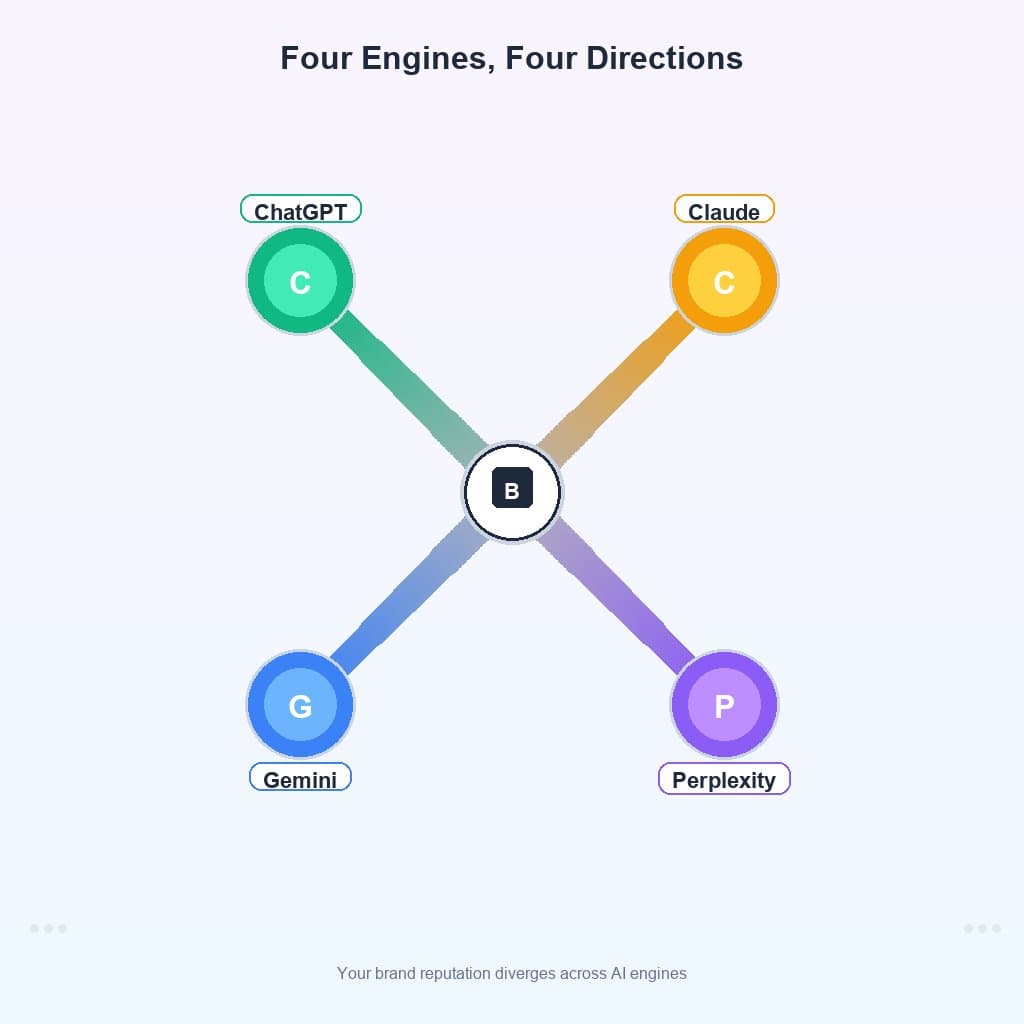

We analyzed 1,540 brands across 145 industries, querying all four major AI engines — ChatGPT, Claude, Gemini, and Perplexity — on the same prompts. The result was not a unified picture of brand perception. It was four wildly different pictures. Your brand doesn't have one AI reputation. It has four. And they often contradict each other.

This is the problem that multi-engine GEO (Generative Engine Optimization) was built to solve. If traditional SEO was about Google, and early GEO was about ChatGPT, multi-engine GEO is about the entire AI ecosystem — because that's where your customers are already looking.

The uncomfortable truth: Your brand's AI reputation isn't one number — it's four different numbers that can wildly contradict each other. If you're only monitoring one AI engine, you're navigating with 25% of the map. We wrote about this phenomenon first in our analysis of the split personality problem.

- 1,540 Brands Analyzed: across 145 industries

- 4 AI Engines: ChatGPT, Claude, Gemini, Perplexity

- 1.0 Max Sentiment Gap: loved by one, ignored by another

- 2x Gemini Mention Lead: vs ChatGPT recommendation rate

The Four Reputations Problem

32.5% vs 15.6%. That's the gap between Gemini's mention rate and ChatGPT's. When we first saw this number, we assumed it was a data error. It wasn't. It's the defining characteristic of the AI search landscape in 2026: each engine lives in its own universe.

Our earlier research found engines disagree on 37% of brands. But this time, with a larger dataset, the picture is even more stark. The disagreement isn't just about whether you're mentioned — it's about how you're described, what sentiment is attached to your name, and whether you're positioned as a leader or an afterthought.

Consider Lululemon: on ChatGPT, it scores a perfect 1.0 sentiment. On Claude, it scores 0.0. Same brand. Same day. Same prompt. As we documented in our AI sentiment splits analysis, this isn't an edge case — it's the norm for brands with any significant AI presence.

The reason boils down to architecture: each AI engine is built on a fundamentally different information pipeline. According to SparkToro's research, the divergence between AI engines is growing, not shrinking, as each company invests in proprietary data sources. Gartner predicts that by 2027, AI-powered search will account for 25% of all search queries.

Brand Mention Rate by Engine

Percentage of brands each engine mentions when asked. Gemini is 2x more generous than ChatGPT.

Meet the Four Personalities

Claude cites 4.31 sources per mention. ChatGPT cites 0.65. That's a 6.6x difference in citation density — and it tells you everything about how each engine "thinks." Our head-to-head comparison breaks down the mechanics, but here's the framework:

ChatGPT: The Cautious Gatekeeper (15.6% mention rate)

ChatGPT is the stingiest engine. It mentions brands in only 15.6% of relevant queries, and when it does, the average sentiment is the lowest of any engine (0.090). Think of ChatGPT as the bouncer at an exclusive club — hard to get in, and even once inside, you're not guaranteed VIP treatment. For strategies to break through, see our guide on how to get recommended by ChatGPT.

Claude: The Academic with Receipts (24.4% mention rate)

Claude has the highest citation rate (41.5%) and provides an average of 4.31 citations per mention, the most of any engine. It backs up its recommendations with sources more consistently than the others. If your brand has strong academic, research-backed, or expert content, Claude is your best friend. Our 2026 AI visibility report shows Claude steadily increasing its citation density quarter over quarter.

Gemini: The Generous Recommender (32.5% mention rate)

Gemini is the most brand-friendly engine by sheer volume. It assigns the "alternative" role 19.7% of the time, 2.5x more often than ChatGPT's 7.8%. When Gemini talks about your brand, the sentiment tends to be more positive (0.163 avg vs 0.090 for ChatGPT). We explored this phenomenon in depth in Gemini's secret favorites.

Perplexity: The Real-Time Aggregator (24.2% mention rate)

Perplexity occupies the middle ground: moderate mention rate, moderate sentiment. But its real-time web search capability means it's the most responsive to fresh content. Publish a new case study or press release, and Perplexity may surface it within hours, while engines that lean on training data may not reflect it for months.

AI Engine Personality Fingerprints

Each engine has a distinct recommendation profile. Higher = more active on that dimension.

How Each Engine Assigns Your Role

Gemini assigns the "alternative" role 2.5x more often than ChatGPT (19.7% vs 7.8%). This matters enormously because the difference between "alternative" and "not mentioned" is the difference between being in the conversation and being invisible.

As we explored in The Alternative Trap, getting mentioned as an "alternative" isn't always good news — but it's infinitely better than not being mentioned at all. The path from alternative to primary recommendation follows specific content patterns we documented in From Alternative to Primary.

ChatGPT's 84.4% "not mentioned" rate is staggering. For every 100 queries where a brand could appear, ChatGPT stays silent on 84 of them. If you're building your GEO strategy around ChatGPT alone, you're optimizing for the engine that ignores brands the most.
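The arithmetic behind these figures is easy to check. Here is a minimal sketch in Python that uses only the mention rates reported in this article; the dictionary and function names are ours, chosen for illustration.

```python
# Mention rates reported in this article (percent of relevant queries).
MENTION_RATE = {
    "ChatGPT": 15.6,
    "Claude": 24.4,
    "Gemini": 32.5,
    "Perplexity": 24.2,
}


def not_mentioned_rate(engine: str) -> float:
    """Percent of relevant queries where the engine names no brand."""
    return round(100.0 - MENTION_RATE[engine], 1)


def mention_ratio(engine_a: str, engine_b: str) -> float:
    """How many times more often engine_a mentions brands than engine_b."""
    return round(MENTION_RATE[engine_a] / MENTION_RATE[engine_b], 2)


print(not_mentioned_rate("ChatGPT"))       # 84.4
print(mention_ratio("Gemini", "ChatGPT"))  # 2.08
```

The "2x" headline is the rounded form of the 2.08 ratio.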

How Each Engine Assigns Brand Roles

Gemini assigns 'alternative' 2.5x more often than ChatGPT. Percentage of all queries.

Strategic insight: The data reveals a clear hierarchy of opportunity. Gemini is the easiest engine to get mentioned on (32.5% mention rate), while ChatGPT is the hardest (15.6%). Start your GEO efforts where the door is most open, then work your way to the tighter gatekeepers. Brands in The 100% Club typically built their visibility by winning Gemini and Perplexity first.

The Sentiment Split: Same Brand, Different Opinions

Lululemon scores a sentiment of 1.0 on ChatGPT and 0.0 on Claude — in the same prompt context. This maximum possible divergence (range of 1.0) isn't unique to Lululemon. Brands like OKX, CFCS Cloud Solutions, and JCCPA all show the same pattern: one engine loves them, another completely disregards them.

Lululemon appeared 10 out of 20 times in our top sentiment divergence list — making it the brand with the most inconsistent AI reputation. In some queries, ChatGPT gives it a 1.0 while Claude gives it a 0.0. In other queries, the roles reverse entirely.

What causes this? Different training data snapshots, different weighting of brand signals, and different definitions of what makes a brand "good." ChatGPT may prioritize brand popularity, while Claude may focus on product quality signals. As noted by Search Engine Land, this fragmentation is accelerating as each AI company develops proprietary ranking signals.

Sentiment Divergence: Same Brand, Different Opinions

Sentiment scores (0 = negative/neutral, 1 = very positive) for brands with max engine disagreement.

Brand Reputation Consistency Scorecard

Sentiment scores across engines for brands with maximum disagreement (range = 1.0 means full swing).

| Brand | ChatGPT | Claude | Gemini | Perplexity | Range |
|---|---|---|---|---|---|
| Lululemon | 1.0 | 0.0 | 1.0 | 0.0 | 1.0 |
| OKX | 0.0 | 0.1 | 1.0 | 0.0 | 1.0 |
| CFCS Cloud | 1.0 | 0.0 | 0.8 | 0.0 | 1.0 |
| JCCPA | 0.0 | 1.0 | 0.8 | 1.0 | 1.0 |
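The Range column is the highest engine score minus the lowest. A small sketch that recomputes it from the scorecard values follows; the `SCORECARD` and `sentiment_range` names are illustrative and do not come from any tool.

```python
# Sentiment scores copied from the scorecard table.
SCORECARD = {
    "Lululemon":  {"ChatGPT": 1.0, "Claude": 0.0, "Gemini": 1.0, "Perplexity": 0.0},
    "OKX":        {"ChatGPT": 0.0, "Claude": 0.1, "Gemini": 1.0, "Perplexity": 0.0},
    "CFCS Cloud": {"ChatGPT": 1.0, "Claude": 0.0, "Gemini": 0.8, "Perplexity": 0.0},
    "JCCPA":      {"ChatGPT": 0.0, "Claude": 1.0, "Gemini": 0.8, "Perplexity": 1.0},
}


def sentiment_range(scores: dict) -> float:
    """Spread between the most and least favorable engine for one brand."""
    return max(scores.values()) - min(scores.values())


for brand, scores in SCORECARD.items():
    print(f"{brand}: range = {sentiment_range(scores)}")
```

A range of 1.0 means at least one engine scored the brand at the top of the scale while another scored it at the bottom.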

Citation Patterns: The Source Trust Gap

Claude provides 4.31 citations per mention. ChatGPT provides just 0.65. This 6.6x citation gap reveals fundamentally different approaches to brand recommendation. Claude wants receipts. ChatGPT trusts its training data.

Perhaps the most surprising finding: Perplexity — marketed as "the answer engine with sources" — actually provides fewer citations per mention (1.97) than both Claude (4.31) and Gemini (3.42). This doesn't mean Perplexity's citations are less valuable — its real-time web search often surfaces more recent sources — but it challenges the assumption that Perplexity is the most citation-heavy engine.
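To see where Perplexity lands, rank the four citation averages quoted in this section. This is only a sketch over the article's own numbers, with illustrative variable names.

```python
# Average citations per mention, as reported in this section.
CITATIONS_PER_MENTION = {
    "ChatGPT": 0.65,
    "Perplexity": 1.97,
    "Claude": 4.31,
    "Gemini": 3.42,
}

# Engines ordered from most to fewest citations per mention.
ranked = sorted(CITATIONS_PER_MENTION, key=CITATIONS_PER_MENTION.get, reverse=True)

# Ratio between the most and least citation-heavy engines.
gap = round(CITATIONS_PER_MENTION[ranked[0]] / CITATIONS_PER_MENTION[ranked[-1]], 1)

print(ranked)  # ['Claude', 'Gemini', 'Perplexity', 'ChatGPT']
print(gap)     # 6.6
```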

For brands, this has direct implications: if you want Claude to recommend you, publish citable, authoritative content. Blog posts with original data, research reports, and case studies with concrete numbers are what Claude's citation engine rewards. We documented the full citation strategy in our coffee wars analysis, where brands with the most citations dominated their category.

Citation Patterns: Who Shows Their Sources?

Claude provides the most citations per mention (4.31 avg). ChatGPT barely cites at all.

Industry Winners and Losers

24 entire industries score 0% AI visibility. If your industry is Activewear, EdTech, Fintech, Mining, or SaaS, there's a chance no AI engine is mentioning any brand in your space. Meanwhile, Fashion Marketplace brands average 71.8% visibility and Cloud Storage sits at 66.8%.

The gap between the most visible and least visible industries is enormous. Fashion Marketplace averages 71.8% visibility while 24 industries sit at 0%, a gap of 71.8 percentage points (a ratio against zero is undefined, so there is no meaningful "x times" figure here). But even within the middle tier, the differences are meaningful: Coffee Chain (27.8%) vs. Project Management (11.4%) represents a 2.4x gap.
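When the lower figure is 0%, a ratio cannot be computed, so a percentage-point difference is the safer comparison. Here is a hedged sketch of that distinction; `visibility_gap` is a hypothetical helper, not part of any tool.

```python
from typing import Optional, Tuple


def visibility_gap(high: float, low: float) -> Tuple[float, Optional[float]]:
    """Return (percentage-point gap, ratio); the ratio is None when low is 0."""
    points = round(high - low, 1)
    ratio = round(high / low, 1) if low > 0 else None
    return points, ratio


print(visibility_gap(27.8, 11.4))  # (16.4, 2.4)   Coffee Chain vs Project Management
print(visibility_gap(71.8, 0.0))   # (71.8, None)  Fashion Marketplace vs a 0% industry
```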

This matters for competitive strategy. In a low-visibility industry like skincare, becoming the first brand AI engines recommend is a massive first-mover advantage. In a high-visibility industry, the battle is about quality of mention, not just presence. McKinsey's analysis of AI-powered purchase decisions confirms that being the first recommendation drives 3x more conversions than being listed as an alternative.

Only one industry, Investment Banking, has a negative average sentiment (-0.043), and only slightly so. It is the one sector where AI engines lean negative on brands. Every other industry, even those with low visibility, at least gets neutral-to-positive treatment when mentioned.

Industry AI Visibility Spectrum

Average visibility score across all 4 engines. 24 industries score 0% — completely invisible to AI.

How Often Do All 4 Engines Agree?

Only 8% of brands are mentioned by all 4 engines. Nearly a quarter (24%) aren't mentioned by any engine at all. Another 22% are mentioned by only one engine, meaning their entire AI visibility hangs by a thread.

This fragmentation is why single-engine monitoring is dangerous. If you check your brand on ChatGPT and it's visible, you might be one of the 22% that's only visible on one engine. The other three engines, which would account for roughly 75% of AI-driven discovery if all four carried equal weight, have never heard of you.
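One way to picture these agreement buckets is to count, for each brand, how many engines mention it. The sketch below uses a made-up five-brand sample (not data from the study), and the function names are hypothetical.

```python
from collections import Counter

ENGINES = ("ChatGPT", "Claude", "Gemini", "Perplexity")

# Hypothetical sample: the set of engines that mentioned each brand.
mentions = {
    "Brand A": {"ChatGPT", "Claude", "Gemini", "Perplexity"},
    "Brand B": {"Gemini"},
    "Brand C": set(),
    "Brand D": {"Claude", "Perplexity"},
    "Brand E": {"ChatGPT"},
}


def agreement_buckets(mentions: dict) -> dict:
    """Count brands by the number of engines (0 to 4) that mention them."""
    counts = Counter(len(engines & set(ENGINES)) for engines in mentions.values())
    return {n: counts.get(n, 0) for n in range(len(ENGINES) + 1)}


def single_engine_brands(mentions: dict) -> list:
    """Brands whose visibility depends on exactly one engine."""
    return sorted(b for b, engines in mentions.items() if len(engines) == 1)


print(agreement_buckets(mentions))     # {0: 1, 1: 2, 2: 1, 3: 0, 4: 1}
print(single_engine_brands(mentions))  # ['Brand B', 'Brand E']
```

Brands in bucket 1 are the ones a single-engine check is most likely to mislead you about.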

The brands in the true "agreement zone" — those mentioned positively by all 4 engines — share specific characteristics we identified in The 100% Club analysis: strong Wikipedia presence, consistent NAP (Name, Address, Phone) data, Schema.org structured markup, and a history of being cited by authoritative publications.

How Often Do Engines Agree?

Of brands with at least some visibility, only 8% are mentioned by all 4 engines.

The Multi-Engine GEO Framework

Based on our data, here's the framework for building visibility across all four engines:

Step 1: Audit All Four Engines

Before you optimize anything, you need to know where you stand. Check your brand across all 4 engines to get your baseline scores. Document your mention rate, sentiment, role, and citation count per engine. This audit is the foundation of your multi-engine GEO strategy.
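A baseline audit can be as simple as one record per engine holding the four fields named above. The following is a sketch under our own assumptions: the `EngineAudit` class, its field scales, and the sample values are hypothetical, not an export format from any tool.

```python
from dataclasses import dataclass
from typing import Dict, List

ENGINES = ("ChatGPT", "Claude", "Gemini", "Perplexity")


@dataclass(frozen=True)
class EngineAudit:
    mention_rate: float   # share of test prompts that mention the brand, 0.0 to 1.0
    sentiment: float      # average sentiment score for those mentions
    role: str             # e.g. "primary", "alternative", or "not mentioned"
    citation_count: int   # citations attached to the brand's mentions


def missing_engines(audit: Dict[str, EngineAudit]) -> List[str]:
    """Engines with no audit record, or where the brand is not mentioned."""
    return [
        engine for engine in ENGINES
        if engine not in audit or audit[engine].role == "not mentioned"
    ]


# Hypothetical baseline for an example brand.
baseline = {
    "ChatGPT": EngineAudit(0.0, 0.0, "not mentioned", 0),
    "Gemini": EngineAudit(0.4, 0.8, "alternative", 3),
}

print(missing_engines(baseline))  # ['ChatGPT', 'Claude', 'Perplexity']
```

Keeping the same record shape for every audit makes later month-over-month comparisons straightforward.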

Step 2: Map Each Engine's Data Preferences

Each engine rewards different types of content:

- ChatGPT: Prioritize brand authority signals — Wikipedia presence, press coverage, consistent branding across the web. ChatGPT's training data favors brands with established digital footprints.

- Claude: Publish citable, data-rich content. Case studies with real numbers, original research, and comprehensive guides. Claude's 4.31 avg citations means it actively looks for sources to reference.

- Gemini: Leverage Google's structured data ecosystem. Keep your Google Business Profile updated, use Schema.org markup, and maintain consistent entity data across Google's knowledge graph.

- Perplexity: Focus on real-time web presence. Perplexity does live search, so fresh blog posts, recent press releases, and up-to-date product pages matter more here than on any other engine.
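For the Schema.org markup mentioned under Gemini, one common pattern is an `Organization` object embedded as JSON-LD. The sketch below generates such a snippet; the brand name and URLs are placeholders, and which properties any engine actually reads is not something our data establishes.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list) -> str:
    """Build a Schema.org Organization description as a JSON-LD string."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Organization",
            "name": name,
            "url": url,
            "sameAs": same_as,  # other profiles that identify the same entity
        },
        indent=2,
    )


# Placeholder values for illustration only.
markup = organization_jsonld(
    "Example Brand",
    "https://www.example.com",
    ["https://en.wikipedia.org/wiki/Example"],
)

# JSON-LD is usually embedded in a page inside a script tag like this one.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```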

Step 3: Prioritize by Opportunity

Our data shows a clear hierarchy of difficulty. Start where the return is highest:

- Win Gemini first (32.5% mention rate — the most accessible engine)

- Capture Claude and Perplexity (24.4% and 24.2% — moderate difficulty)

- Crack ChatGPT last (15.6% — the hardest gatekeeper)

Step 4: Monitor Continuously

AI engine behavior changes. We've documented significant shifts in engine recommendation patterns over just 30-day periods. What worked last month may not work next month. Set up regular audits — monthly at minimum — to track your multi-engine visibility over time.
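A recurring audit boils down to comparing two snapshots and flagging the engines that moved. Here is a minimal sketch, assuming per-engine mention rates stored as fractions; the 0.10 threshold and the sample numbers are arbitrary illustrations, not recommendations drawn from our data.

```python
def flag_shifts(previous: dict, current: dict, threshold: float = 0.10) -> dict:
    """Return engines whose mention rate moved by at least `threshold`."""
    shifts = {}
    for engine in sorted(previous.keys() & current.keys()):
        delta = round(current[engine] - previous[engine], 3)
        if abs(delta) >= threshold:
            shifts[engine] = delta
    return shifts


# Hypothetical monthly snapshots for one brand.
last_month = {"ChatGPT": 0.20, "Claude": 0.30, "Gemini": 0.45, "Perplexity": 0.25}
this_month = {"ChatGPT": 0.05, "Claude": 0.32, "Gemini": 0.45, "Perplexity": 0.40}

print(flag_shifts(last_month, this_month))  # {'ChatGPT': -0.15, 'Perplexity': 0.15}
```

The same comparison can be run on sentiment or citation counts by passing those values instead of mention rates.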

Pro tip: The most successful brands in our dataset don't try to game individual engines. They build genuine authority signals — original research, expert content, strong backlink profiles — that naturally resonate across all four engines. Multi-engine GEO isn't about tricks; it's about building real authority that every AI can recognize.

Frequently Asked Questions

What is multi-engine GEO?

Multi-engine GEO is the practice of optimizing your brand's visibility across all major AI engines (ChatGPT, Claude, Gemini, and Perplexity) rather than focusing on just one. Each engine has different data sources, recommendation patterns, and biases, so a single-engine strategy leaves three of the four engines unaddressed.

Why does my brand have different reputations across AI engines?

Each AI engine is trained on different data, uses different retrieval methods, and has different biases. ChatGPT relies on training data, Perplexity does real-time web search, Claude prioritizes citation-heavy academic sources, and Gemini leverages Google's knowledge graph. These different data pipelines create different brand perceptions.

Which AI engine recommends brands most often?

Gemini at 32.5%, followed by Claude (24.4%), Perplexity (24.2%), and ChatGPT (15.6%). But volume isn't everything — the quality and sentiment of mentions vary dramatically between engines.

Can a brand be loved by ChatGPT but ignored by Claude?

Absolutely. Lululemon scores 1.0 sentiment on ChatGPT and 0.0 on Claude in the same query context. This maximum divergence (range = 1.0) affects about 15% of brands in our dataset.

How do I check my brand across all 4 AI engines?

Use GeoBuddy's free brand check to scan your brand across all four engines simultaneously. You'll get per-engine visibility scores, sentiment analysis, role assignments, and actionable recommendations.

What is the best GEO strategy for 2026?

Audit all 4 engines, identify gaps, and optimize for each engine's unique preferences: structured data for Gemini, citation-worthy content for Claude, real-time web presence for Perplexity, and authoritative signals for ChatGPT.